Who Controls the Digital Public Square?

Europe’s clash with X reflects a deeper struggle over power, transparency and democratic oversight.

What is at stake is not whether speech is permitted, but whether power over visibility, reach and accountability remains concentrated in private hands. As digital platforms increasingly function as gatekeepers of public life, Europe’s effort to impose transparency marks an early test of democratic oversight in the algorithmic age. The outcome will shape not only regulation, but the future balance between markets, citizens and institutions.

In December 2025, the European Commission imposed a €120 million fine on the social media platform X, owned by Elon Musk, for violations of the EU’s Digital Services Act (DSA). The decision was the first fully completed enforcement ruling under Europe’s landmark digital law, and it immediately triggered a political and rhetorical escalation far beyond the scope of the case itself.

At issue was not political speech, opinions, or content moderation, but transparency: how a major digital platform documents its decisions, explains the functioning of its systems, and enables users and researchers to understand and contest how visibility and reach are governed in the digital public sphere.

What followed, however, transformed a regulatory enforcement action into a broader confrontation over legitimacy and power. Elon Musk responded by publicly calling for the abolition of the European Union, reframing a narrowly defined legal decision as an existential attack on free expression and sovereignty.

At first glance, the decision appeared administrative: a €120 million fine imposed by the European Commission on the platform X under the Digital Services Act. It was a ruling grounded in transparency obligations, adopted through due process, and based on rules that apply to all platforms operating within the European Union.

The reaction, however, revealed far more than a dispute over regulatory compliance. It exposed a collision between private digital power and democratic authority: a confrontation over who sets the terms of accountability in the digital public sphere.

To assess the scope and intent of the decision, I reached out to the European Commission, including spokesperson Thomas Regnier, and to Věra Jourová, former Vice-President of the European Commission and a pioneer of digital rights in Europe.

The escalation was immediate and disproportionate. A regulatory enforcement action was reframed as an existential threat, turning a narrow legal decision into a symbolic struggle over legitimacy, power, and control of speech infrastructure.

Musk’s call to abolish the European Union did not engage with the legal findings of the case or the specific transparency failures identified by the Commission. Instead, it represented a familiar form of anti-institutional escalation: a strategy in which regulatory oversight itself is portrayed as illegitimate in order to evade scrutiny.

Such reactions are characteristic of unregulated power centers when confronted with external constraints. Rather than contesting the substance of the decision, the authority imposing limits is reframed as the problem. In this inversion, accountability becomes oppression, and rule enforcement is cast as ideological repression.

The escalation was not accidental. It functioned as delegitimization through exaggeration: by expanding a narrow regulatory dispute into a civilizational conflict, it shifted attention away from transparency obligations and toward a broader narrative of institutional overreach.

Rules, Not Speech

From the Commission’s perspective, the case is deliberately narrow. Officials insist that the decision is not about speech, opinions, or political content.

Thomas Regnier, the Commission spokesperson, stated unambiguously:

“This decision has nothing to do with content moderation. The decision is about transparency provisions for citizens in the European Union.”

This distinction lies at the core of the Digital Services Act. The DSA does not tell platforms what to remove. It regulates how platforms explain their actions, how they document their decisions, and how citizens can contest unjustified restrictions.

The Commission has rejected accusations that the law amounts to censorship.

“The DSA and our digital legislation have nothing to do with censorship,”

the spokesperson said, stressing that this position has been clear since the beginning of the mandate.

Who Moderates Content

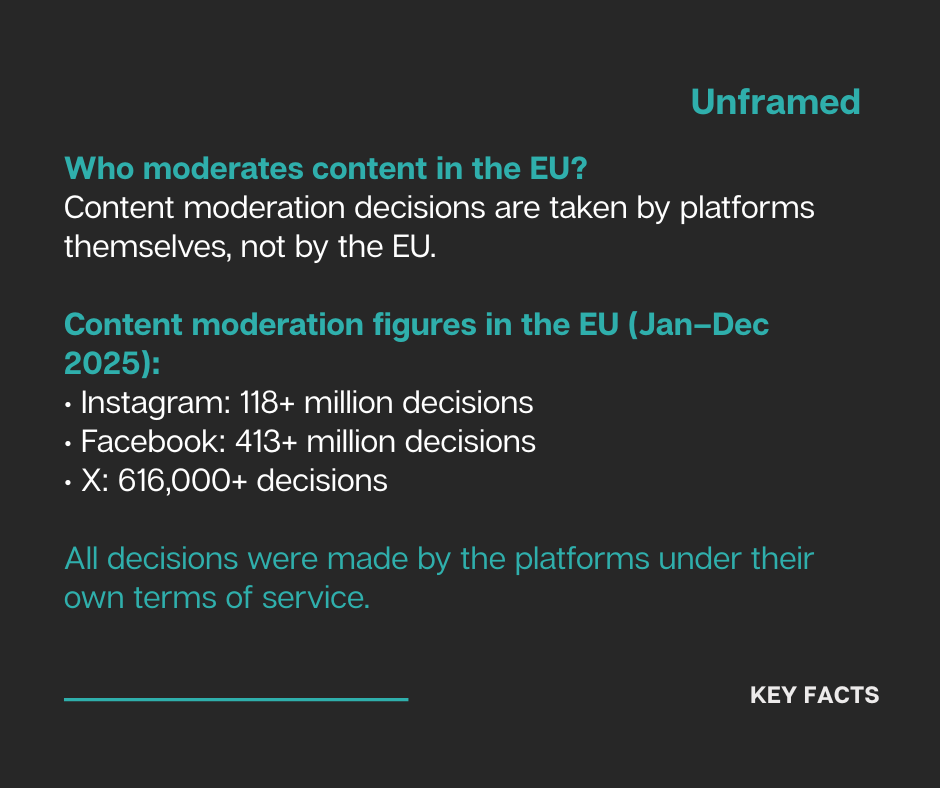

To counter claims of political interference, the Commission pointed to scale and responsibility.

In 2025 alone, platforms operating in the EU took vast numbers of moderation decisions under their own terms and conditions.

“Instagram took more than 118 million content moderation decisions in the EU,” the spokesperson noted.

“Facebook took more than 413 million content moderation decisions… X took more than 616,000.”

All of these decisions, the Commission emphasized, were taken by the platforms themselves, not by EU institutions.

“These are facts,” the spokesperson underlined, adding that the EU’s aim is not to direct moderation, but to ensure fairness.

“We want platforms to enforce their terms and conditions and to make sure that our citizens can fight back against unjustified content moderation decisions.”

The emphasis is on user rights, not editorial control.

When Power Meets Limits

Yet the reaction to the ruling showed how sensitive such limits remain. Musk’s call to abolish the EU did not engage with the technical findings of the case; instead, it recast regulatory enforcement as ideological repression.

Věra Jourová, the former Commission Vice-President, reads this inversion as a familiar historical reflex. The digital rules, she notes, were not imposed lightly.

“The digital rules have been democratically adopted after years of very broad debate on whether and how to regulate the digital space.”

By the end of that debate, the idea of leaving the digital sphere entirely unregulated had largely collapsed under the weight of empirical evidence.

For Jourová, the claim that freedom of expression is being curtailed misses the point.

“Freedom of expression has not been limited at all,”

she argues, pointing out that the DSA builds on already existing legal frameworks rather than inventing new speech prohibitions.

What has changed is not what may be said on platforms, but whether platforms must explain themselves.

Due Process, Not Targeting

Against the political rhetoric, the Commission continues to emphasize procedural restraint.

“We are not targeting any company or jurisdiction,”

the spokesperson stated.

“We base our decisions on due process.”

The DSA, officials stress, applies only within the European Union, but it applies to all platforms operating there. Enforcement follows evidence, not ideology.

“When we are ready to adopt a decision, we adopt the decision,”

Regnier said, underlining the Commission’s insistence on legal sequence rather than political reaction.

The Algorithmic Public Sphere

Beyond the immediate enforcement lies a quieter reality. Power in today’s digital public sphere is rarely exercised through outright bans. It operates through algorithms, ranking systems, and visibility controls. Content is not necessarily removed. It is deprioritized. Accounts are not silenced. They are made marginal.

This form of governance leaves little trace and offers limited recourse. It shapes behavior indirectly, encouraging self-regulation through uncertainty rather than command.

Here, the DSA intervenes not to judge ideas, but to illuminate systems.

Media and Democratic Resilience

For Jourová, the implications reach far beyond platforms themselves.

“Never before has it been so vital to have free and capable media,” she warns, noting that journalism now depends on infrastructures it cannot control and economic models that increasingly erode its sustainability.

The European Media Freedom Act, now enforceable, seeks to counter platform interference and discriminatory algorithmic treatment. Yet Jourová cautions that regulation alone will not restore a healthy public sphere. Without investment in journalism and public awareness, even the strongest legal frameworks remain fragile.

Beyond the Fine

Stripped of rhetoric, the X case is not about censoring speech. It is about whether private digital power accepts democratic oversight.

The Commission insists it is enforcing rules, not policing ideas. Jourová frames the backlash as resistance to limits themselves. Musk’s reaction exposes how deeply contested those limits have become.

Between these positions lies a defining question of the digital age.

Not whether speech is allowed,

but who controls visibility,

who evades accountability,

and whether democratic institutions still have the authority to govern the infrastructures that shape public life.

Taken together, the case suggests that Europe is testing new ways of asserting democratic oversight in the digital sphere, not as a final answer, but as a beginning.

.